Selected Writing

This section brings together a selection of writing that reflects my ongoing thinking about pedagogy, professional judgement, and institutional change in higher education. These pieces span reflective essays, invited articles, longer-form analysis, and links to external commentary. They are intended to contribute to sustained sector conversations rather than to offer reactive, time-bound responses. The focus is on how universities exercise judgement, design for learning quality, and govern uncertainty in changing conditions.

Featured LinkedIn Insight, published January 2026:

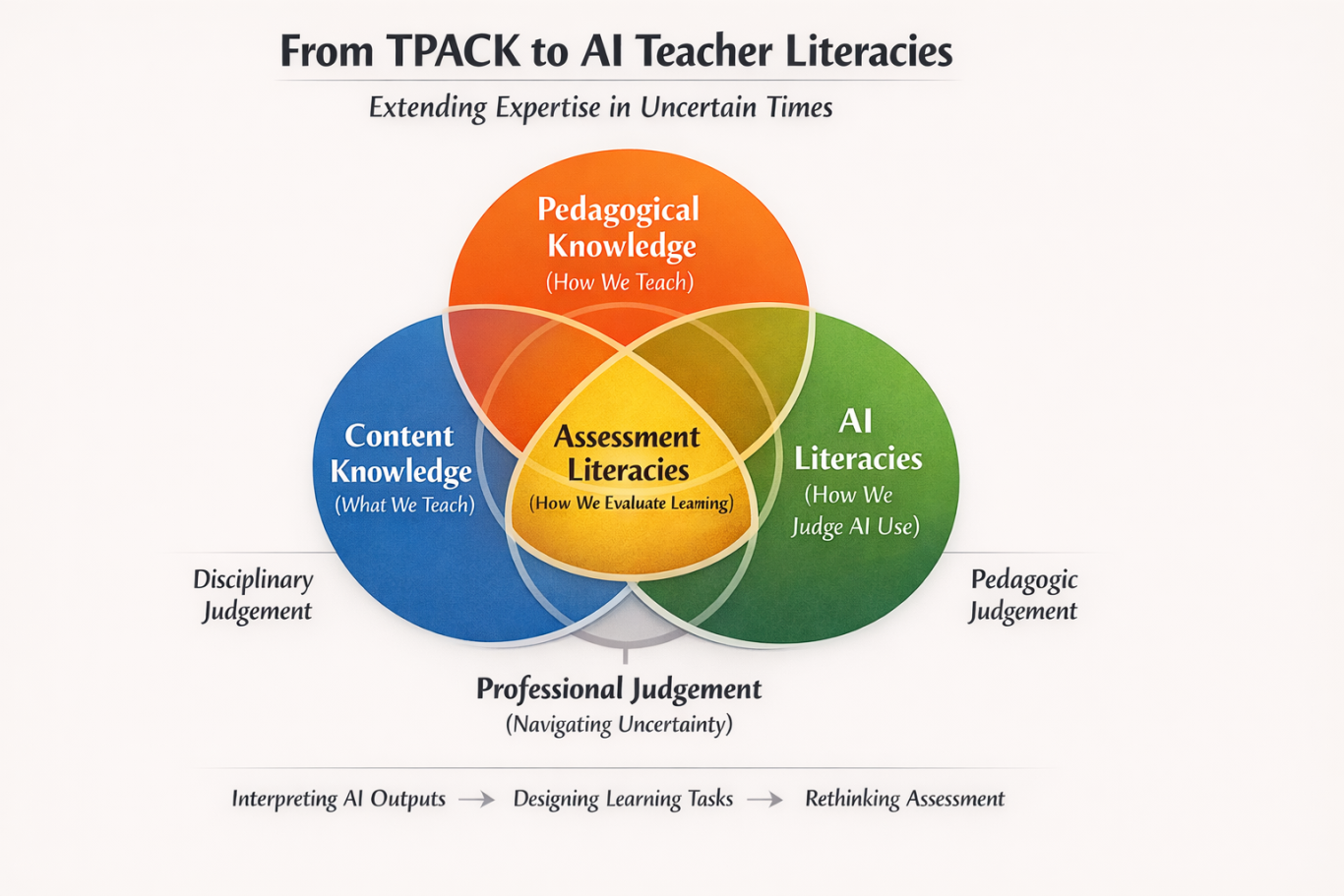

From TPACK to AI Teacher Literacies: Extending a framework for professional judgement

This piece revisits the Technological Pedagogical Content Knowledge (TPACK) framework as a way of thinking about professional judgement in teaching, asking what happens when the “technology” involved is no longer simply a teaching aid but a system that actively produces knowledge-like outputs. It explores how generative AI unsettles long-standing assumptions about pedagogy, assessment, and academic responsibility, and argues for understanding AI teacher literacies as judgement literacies rather than skills or competencies.

In my doctoral work, I was particularly interested in how TPACK develops within communities of practice: how educators learn to make pedagogic decisions that feel meaningful, sustainable, and grounded in disciplinary knowledge. TPACK resists the idea that effective teaching can be reduced to tools or techniques. Instead, it foregrounds judgement: knowing what to do, why, and when, in a specific educational and disciplinary setting.

As generative AI has moved rapidly into everyday academic practice, I have found myself returning to TPACK with a renewed question: not whether the framework still holds (I believe it does), but what happens to TPACK when the “technology” involved is no longer just a teaching aid but a system that actively produces knowledge-like outputs.

Why AI unsettles the “T” in TPACK

In classic TPACK terms, technological knowledge refers to understanding how particular technologies afford or constrain pedagogic approaches and content representation. This framing works well when technologies are largely instrumental: virtual learning environments, lecture capture, polling tools, simulations.

Generative AI is different.

AI does not simply support teaching activities; it participates in them. It generates text, explanations, code, images, and feedback that can closely resemble the outputs we have traditionally treated as evidence of student learning. As a result, AI does not sit neatly alongside pedagogy and content. It cuts across them, reshaping their intersections.

This is why staff unease around AI is often mischaracterised as a matter of digital skills or tool confidence. What is being unsettled is not familiarity with tools but confidence in professional judgement. Decisions about effort, authorship, understanding, and progression that were once implicit are now exposed and must be made explicit.

This is where the idea of AI teacher literacies becomes useful, provided we are clear about what kind of literacy we mean.

AI teacher literacies as an extension of TPACK

Rather than treating AI literacy as an additional competence to be bolted on, it is more productive to use TPACK as a lens for examining how AI reshapes the intersections between content, pedagogy, and assessment.

From this perspective, AI teacher literacies are not separate from subject or pedagogic literacies. They are about how judgement is exercised within them, under new conditions of uncertainty.

AI and subject (content) literacies

Within TPACK, content knowledge is fundamentally disciplinary. It involves understanding what counts as legitimate knowledge, explanation, argument, or evidence in a particular field.

AI teacher literacy at this intersection is not about knowing what AI can generate, but about judging how AI aligns with, simplifies, or distorts disciplinary ways of knowing. In many subjects, AI reproduces surface knowledge convincingly while struggling with interpretation, uncertainty, or value-based judgement. In others, it challenges long-held assumptions about originality, method, or expertise.

This means subject expertise becomes more important, not less. Teachers need to judge which aspects of disciplinary learning are vulnerable to automation, which may be amplified by AI, and which remain irreducibly human, contested, or contextual.

AI and pedagogic literacies

Pedagogic knowledge in TPACK concerns how learning is designed, scaffolded, and supported.

AI complicates this by redistributing effort. Tasks that once required extended drafting or practice can now be completed quickly with AI assistance. Feedback cycles can be accelerated, but also flattened. Struggle, long understood as part of learning, becomes harder to see.

AI teacher literacy here involves design judgement: deciding when AI functions as a cognitive scaffold, when it becomes a shortcut, and when it undermines the learning intention of a task. These decisions are necessarily situated. They depend on subject, level, cohort, and purpose.

This aligns closely with the work of Luckin (2024), whose research frames AI not as automation but as intelligence amplification. Her work emphasises that AI reshapes how thinking and responsibility are distributed between people and systems. From this perspective, the pedagogic question is not whether AI is used, but how responsibility for thinking, sense-making, and decision-making is shared.

AI and assessment literacies: the missing intersection

Assessment has always been the stabilising force within teaching systems. It is where pedagogy, content, and academic standards meet.

AI disrupts that stability. When AI can generate plausible outputs, traditional assumptions about what assessment evidences become fragile. The question shifts from “can this be completed with AI?” to “what does this assessment allow me to judge?”

AI teacher literacy at this intersection is about maintaining the legitimacy of academic judgement without defaulting to surveillance or prohibition. It involves designing assessment that makes disciplinary thinking, decision-making, and judgement visible — even when AI is present.

In this sense, AI exposes assessment as the real centre of the teaching system, rather than pedagogy alone. This is often uncomfortable, but also clarifying.

AI teacher literacies as judgement literacies

Across these intersections, a consistent pattern emerges. AI teacher literacies are not primarily about tool use. They are about the capacity to exercise professional judgement under conditions of uncertainty.

This includes:

judging when AI use supports learning and when it substitutes for it

explaining those judgements transparently to students

working with partial evidence rather than waiting for a certainty that may arrive too late

balancing care, trust, and academic standards

AI does not introduce judgement into teaching; it makes judgement visible. Decisions that were previously embedded in routines or conventions can no longer remain implicit.

Seen this way, AI does not add a new literacy alongside pedagogy and content. It exposes judgements that were always present but must now be articulated, defended, and shared.

An invitation to think together

I am currently developing work with colleagues that uses this framing as a starting point rather than a finished model. The aim is not to define what AI teacher literacies should be, but to surface where professional judgement now feels most exposed, least supported, or most contested.

Questions I am holding open include:

Where do you feel most uncertain about your academic judgement now, compared to two years ago?

Which intersections (subject, pedagogy, assessment) feel most disrupted in your context?

What do institutions underestimate about the impact of AI on teaching quality?

By grounding AI teacher literacies in established pedagogic frameworks such as TPACK, my hope is that we can move the conversation away from skills checklists and towards shared professional sense-making.

This feels like the work ahead: not mastering AI, but learning how to judge well, together, when the ground beneath teaching practice is shifting.

References / further reading

Mishra, P. & Koehler, M. J. (2006). Technological Pedagogical Content Knowledge: A Framework for Teacher Knowledge. Teachers College Record, 108(6), 1017–1054.

Koehler, M. J., Mishra, P., & Cain, W. (2013). What is Technological Pedagogical Content Knowledge (TPACK)? Journal of Education, 193(3), 13–19.

Luckin, R. (2018). Machine Learning and Human Intelligence: The Future of Education for the 21st Century. UCL IOE Press.

Luckin, R. (2022–2024). Selected work on human-centred AI in education.